"They're killing people": Was Biden right about Facebook?

It's all Facebook's fault, or don't shoot the messenger: where do you stand?

President Joe Biden

On Friday 16th July, a reporter asked President Biden, "What's your message to platforms like Facebook?"

Biden thought for a second or two: "They're killing people. The only pandemic we have is amongst the unvaccinated."

On Monday, the President backed away from the words.

When asked by CNN to explain his words from Friday, Biden said, "Facebook isn't killing people, these 12 people are out there giving misinformation. Anyone listening to it is getting hurt by it. It's killing people. It's bad information."

Biden was referring to data from the nonprofit Center for Countering Digital Hate in a report published in March. The report, called "The Disinformation Dozen", identified a dozen people as the super-spreaders of anti-vaccine misinformation on Facebook, together accounting for 73% of it.

The March study analyzed content on social media that was shared or posted over 812,000 times between February and March. The researchers found that a tiny group of determined anti-vaxxers was responsible for the vast majority of vaccine disinformation.

The report also showed how the social media platforms could fix it by enforcing their own standards!

Biden's point on both Friday and Monday was that Facebook (and the other social media platforms) were not doing enough, if anything, to stop the spread of misinformation.

This is not new ground for Biden when it comes to criticising Facebook.

During the Presidential election campaign last June, Biden wrote an open letter to Facebook CEO Mark Zuckerberg and said, “Tens of millions of Americans rely on Facebook as a news source ... and the company continues to amplify misinformation”.

Back then, Biden's focus was on election misinformation and the social media platform's willingness to accept advertising revenues from any source in return for targeting ads and content at voters.

Was Joe Biden right then, and is he right now?

In the last week, Mark Zuckerberg has spoken about the President's comments in an interview with Casey Newton. Zuckerberg said,

“It’s a little bit like fighting crime in a city. No one expects that you’re ever going to fully solve crime in a city.”

I have my own (strong) opinion on this issue, and full disclosure, I am biased against Facebook.

However, putting my own bias to one side, I went in search of some data points to try and answer this question.

First, I ran a poll on LinkedIn over the weekend. It was seen by nearly 14,000 people and this is how they voted.

Ed Note: My bias is shaped by reading Zucked by Roger McNamee and The Four by Scott Galloway. At the moment, I am halfway through An Ugly Truth by Sheera Frenkel and Cecilia Kang, and it hasn't said anything that would change my POV!

These books are from reliable sources, IMHO, and none of them paints Facebook in a good light... other than as having a totally awesome business model.

Facebook has an awesome business model

In 2020, Facebook's revenue was almost $86 billion, with an operating margin of c. 38%.

Not bad for a service that is "free".

Facebook has more reach and influence than any other organisation, institution, or government in the world! Around a third of the world's population, some 2.7 billion people, is on Facebook.

And 1.8 billion of them use Facebook every day. (Source: Facebook data)

To put that into context, Facebook's 2.7 billion users outnumber the population of either of the two most populous nations, China (1.4 billion) or India (1.3 billion).

They also outnumber the followers of any of the largest religions in the world: Christianity at 2.3 billion people, Islam at 1.8 billion, or Hinduism at 1.1 billion.
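The reach figures above can be sanity-checked with a few lines of Python. This is only a rough sketch: the user counts are the ones quoted in this article, while the world population of roughly 7.8 billion in 2020 is my own assumption and does not come from the sources.

```python
# Figures quoted in the article (Facebook's own data).
monthly_users = 2.7e9
daily_users = 1.8e9

# Assumption: world population of roughly 7.8 billion in 2020 (not from the article).
world_population = 7.8e9

# Fraction of the world's population with a Facebook account.
share_of_world = monthly_users / world_population

# Fraction of Facebook users who log in every day.
daily_share_of_users = daily_users / monthly_users

print(f"Share of world on Facebook: {share_of_world:.0%}")
print(f"Share of users active daily: {daily_share_of_users:.0%}")
```

Under that assumption the numbers hold up: about a third of the world is on Facebook, and about two-thirds of those users come back every day.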

When you break the demographics down, 4 in 5 Facebook users are aged 45 or younger, and women (54%) outnumber men (46%).

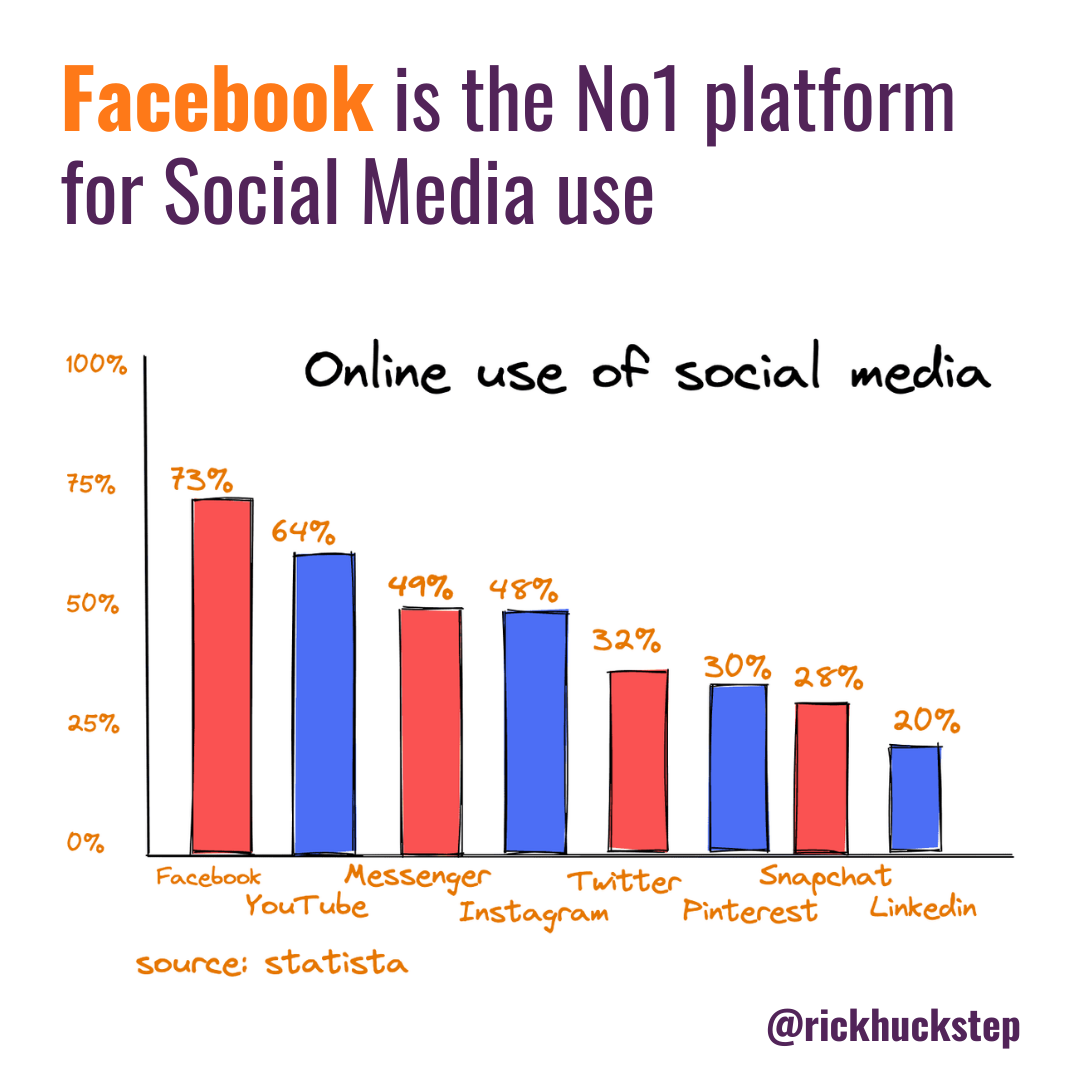

Taking a closer look at the US demographics, around 7 in 10 Americans are on Facebook, and around half of them use Facebook "more than once a day", according to research from Pew Research and Statista.

Interestingly, when surveyed in 2018, around three-quarters of Facebook users were not aware that the site lists "their traits and interests for advertisers".

When made aware of this, 51% of users were "not comfortable with Facebook maintaining this kind of list."

Coming back to the point of this article, the stat that is most relevant to the Biden question is (according to Reuters),

36% of all US adults get news from Facebook.

Misinformation and public opinion

A study by the online advocacy group Avaaz found that Facebook could have prevented an estimated 10.1 billion views of the most prominent pages that repeatedly shared misinformation relating to the US presidential election.

The study also found:

The top 100 most popular false or misleading stories on Facebook, related to the 2020 elections, received an estimated 162 million views.

Although each of the 100 stories had a fact-check publicly available from an organisation working in partnership with Facebook, 24% of the stories had no warning labels to inform users of falsehood.

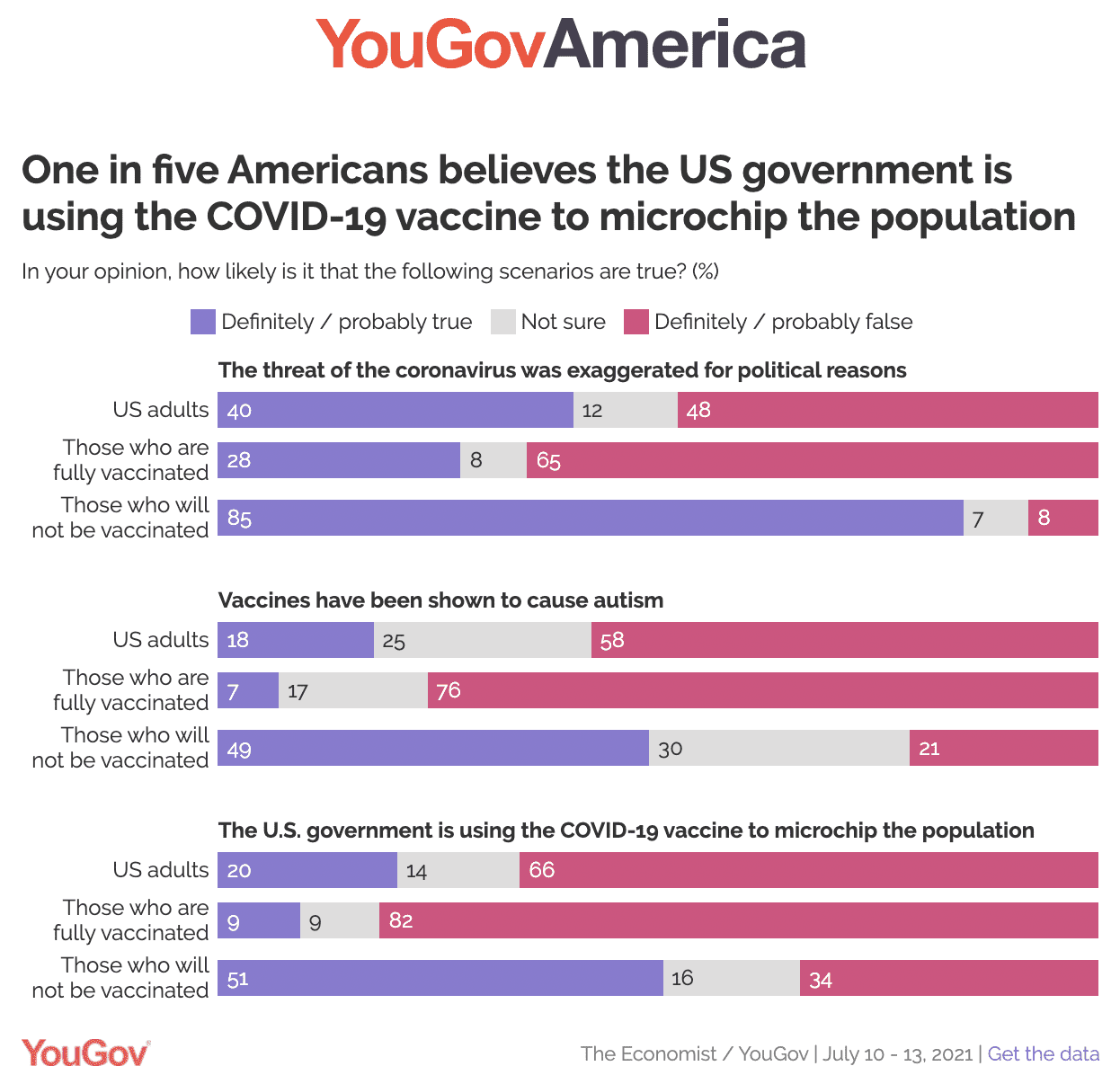

When it comes to Covid misinformation, a survey by YouGov America published this week found that 18% of Americans will not get vaccinated and a further 11% are unsure about it.

They also reported a significant split along political lines, with only 4% of Democrats saying they won't take the vaccine, compared to 29% of Republicans. (You can draw your own conclusions on that data point.)

The survey sought to understand why such a high percentage of Americans won't get vaccinated and found two primary reasons:

half think it is likely that vaccines cause autism, or

they think that this vaccine is being used by the government to microchip the population.

51% of Americans who will not get vaccinated think that the US government is microchipping the population!

Nowt as funny as folk!

Facebook fights back

After Biden's criticism of Facebook on anti-vax misinformation, the Facebook newsroom went on the offensive.

Led by the VP of Integrity (now that's a job title I've never seen before), Facebook went after Biden, claiming that the President was trying to deflect attention away from his failed vaccination program.

Ed Note: Politically, Facebook may come to regret such an aggressive response given that (a) they never did anything like that to Trump, and (b) the Democrats could be in the White House for another 7 years. With anti-trust and "break up Big Tech" gaining momentum, now may not be a good time to poke the bear!

In their rebuttal statement, Facebook urged the President to focus on "facts, not allegations". Some might see the irony here, but I couldn't possibly comment!

Unfortunately, Facebook haven't released all the facts that would inform the debate. In the press release, Facebook said that 2 billion people had viewed accurate information on vaccines.

But they failed to disclose how many Facebook users had seen the inaccurate information on vaccines. (And don't think that they don't know what that number is!)

Which brings us on to CrowdTangle.

CrowdTangle: the secret to winning Facebook

Fast Company once called CrowdTangle, ‘the secret… to winning Facebook.’

CrowdTangle is an app that Facebook acquired in 2016 and is widely considered to be a game-changer for journalists in the digital age. The platform is an invaluable tool for local news, agencies, and pretty much any other company that needs to keep track of what is happening on social media.

In fact, it has been so successful that it is being used against Facebook. So they've just announced they're shutting it down.

CrowdTangle works by aggregating public content from Facebook, Twitter, Instagram, and Reddit. It then allows a news agency or media manager to break the data down and interrogate it by keywords, metrics, and even arbitrary tags.
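To make that "aggregate, then interrogate" idea concrete, here is a toy sketch in Python. It does not use CrowdTangle's actual interface. The sample posts, the field names, and the `filter_posts` and `interactions_by_platform` helpers are all made up for illustration.

```python
from collections import defaultdict

# Hypothetical sample records; a real tool would collect these from each platform.
posts = [
    {"platform": "facebook", "text": "New vaccine study released", "interactions": 1200, "tags": ["health"]},
    {"platform": "twitter", "text": "Local election results", "interactions": 300, "tags": ["politics"]},
    {"platform": "reddit", "text": "Vaccine rollout Q&A", "interactions": 450, "tags": ["health"]},
    {"platform": "instagram", "text": "Weekend football highlights", "interactions": 900, "tags": ["sport"]},
]


def filter_posts(posts, keyword=None, tag=None, min_interactions=0):
    """Return posts matching an optional keyword, tag, and interaction floor."""
    matches = []
    for post in posts:
        if keyword and keyword.lower() not in post["text"].lower():
            continue
        if tag and tag not in post["tags"]:
            continue
        if post["interactions"] < min_interactions:
            continue
        matches.append(post)
    return matches


def interactions_by_platform(posts):
    """Sum interactions per platform for a set of posts."""
    totals = defaultdict(int)
    for post in posts:
        totals[post["platform"]] += post["interactions"]
    return dict(totals)


vaccine_posts = filter_posts(posts, keyword="vaccine")
print(interactions_by_platform(vaccine_posts))
```

Swap the keyword for a tag or an interaction threshold and you have the basic workflow of a social media manager slicing the same pile of posts in different ways.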

The outcome is phenomenal for social media managers (and news manipulators) who want to understand what is being said across multiple social media platforms so that they can fine-tune their messaging to be "on trend".

New York Times reporter Kevin Roose has used CrowdTangle data to create a Twitter account called @FacebooksTop10.

Every day, Roose posts a Top 10 list of the link posts with the highest engagement on Facebook.

The lists have regularly shown how much engagement right-wing news gets on a daily basis compared to everything else.

The point that Roose makes about Facebook is that it contains a giant right-wing echo chamber, with a hyper-engaged audience of loyal partisans who love liking, sharing, and clicking on posts from right-wing pages, many of which have gotten good at serving up Facebook-optimized outrage.

CrowdTangle has enabled and shaped years’ worth of coverage of misinformation, especially since Facebook acquired it. The tool offers unique access to trending topics, public accounts and communities, and viral posts on Facebook, Instagram and Reddit that would otherwise be largely inaccessible.

It is also important to understand that CrowdTangle isn’t independent.

As First Draft has written about before, platforms’ interests are baked into their analytics tools. Facebook has a history of fiddling with its data, which has always raised serious questions about our dependence on it as misinformation experts.

Content Moderation (or lack of)

This example from ProPublica, written a year ago at the start of the pandemic, demonstrates how a Facebook group can influence and "educate" a community of users with a common interest.

In this case, the Facebook group is for Donald Trump-supporting firefighters and paramedics. When Donald Trump was calling Covid a hoax and the "China flu", the group accepted this and amplified it.

The point is that this piece of misinformation (that Covid was a hoax) went unchecked by Facebook. There was a common bond (trust) amongst the 27,000 firefighters and paramedics in the group, which allowed the falsehood to spread and influence.

How misinformation starts

In this article for Popular Information, Judd Legum and Tesnim Zekeria share one of the ways in which Facebook is used to manipulate real news and give it a spin.

It's a long article and well worth a read. It explains how legit news media sites have their stories taken and reposted with a new headline that is written to appeal to a target audience.

The new headline often massively misrepresents the original content, which is largely unchanged. The biggest example of this is The Daily Wire, founded by right-wing pundit Ben Shapiro.

"Despite having only a few dozen employees and producing little original reporting, The Daily Wire attracts far more engagement on Facebook than media outlets like The New York Times, which employs thousands of journalists and regularly breaks major stories." Judd Legum

Nearly all of the top stories from The Daily Wire were aggregations of another news report, a tweet, or a video. The Daily Wire takes another outlet's reporting, excerpts it, and gives it an inaccurate or incendiary spin.

Here's how it works.

A credible newspaper writes a story.

A media outlet takes the story, spins it, and pushes it back out with a headline designed to trigger their target audience.

The spun article gets a ton of engagement (shares, comments, likes).

The Facebook algorithm rewards posts with higher engagement.

Highly engaged articles attract more advertising revenues.
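The steps above can be sketched as a toy feed ranker. To be clear, this is not Facebook's actual algorithm, which is not public. The `Post` class, the scoring weights, and the sample numbers are all hypothetical and exist only to show why a spun headline can outrank the original report.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    headline: str
    shares: int
    comments: int
    likes: int


def engagement_score(post: Post) -> int:
    # Hypothetical weights: shares count most, then comments, then likes.
    return 3 * post.shares + 2 * post.comments + post.likes


def rank_feed(posts: List[Post]) -> List[Post]:
    # Highest engagement first, so the most provocative posts surface at the top.
    return sorted(posts, key=engagement_score, reverse=True)


# Made-up numbers: the same story, once as reported and once with a spun headline.
original = Post("Original report", shares=100, comments=50, likes=400)
spun = Post("Spun headline", shares=900, comments=700, likes=2000)

feed = rank_feed([original, spun])
print([p.headline for p in feed])
```

Nothing in the score looks at accuracy. If a ranking rewards engagement alone, whichever version triggers the most reactions wins the top slot.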

In May 2021, stories from The Daily Wire attracted 70.9 million engagements, while The New York Times' stories attracted 15.3 million engagements, according to the social media analytics firm NewsWhip.
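The gap in those NewsWhip figures is easier to appreciate as a ratio. A quick back-of-the-envelope check in Python, using only the two numbers quoted above:

```python
# Facebook engagements in May 2021, as quoted from NewsWhip.
daily_wire_engagements = 70.9e6
nyt_engagements = 15.3e6

# How many times more engagement The Daily Wire attracted.
ratio = daily_wire_engagements / nyt_engagements
print(f"The Daily Wire attracted {ratio:.1f}x the engagement of The New York Times")
```

That works out to more than four and a half times the engagement, from an outlet with a few dozen employees.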

Facebook's Oversight Board

The Facebook Oversight Board was established late in 2020 as an independent body to oversee content decisions on Facebook's social media platforms. It is often described as a kind of Supreme Court for Facebook.

Content moderation decisions, for instance, removing or not removing a particular post, made by Facebook and Instagram can be appealed to the board. Facebook says that decisions made by the board are final.

The board is made up of 20 experts in areas like free expression, human rights, and journalism.

The 20 current members include notable individuals from around the world, such as

Helle Thorning-Schmidt, former prime minister of Denmark;

Alan Rusbridger, former editor-in-chief of The Guardian; and

Tawakkol Karman, a Nobel Peace Prize laureate who promoted non-violent change in Yemen during the Arab Spring, a movement in which social media played an important role.

The board is designed to be independent of Facebook, according to its charter.

The Oversight Board upheld Facebook's suspension of former President Donald Trump in May 2021 but left the length of the ban to the company, and Facebook then set it to run until at least January 2023. Trump responded by saying he'll not be inviting Zuckerberg to the White House for dinner again next time he's President. (Trump and Zuckerberg had an "undisclosed" dinner at the White House in 2019.)

Some things you probably didn't know about Facebook

1) Facebook is primarily blue because Mark Zuckerberg has red-green color blindness.

2) You can’t block Mark Zuckerberg on Facebook.

3) The most-followed celebrity on Facebook is Cristiano Ronaldo with 148 million fans.

4) The average number of Facebook friends is 338.

5) 30% of users say they’re connected to people they’ve never met in person.

6) There is an estimated average of 3.57 degrees of separation between any two people on Facebook.

7) Facebook stores approximately 300 petabytes of user data on its servers. To put all of that into perspective, if only one petabyte comprised songs, it would take 2000 years to play them back to back!

8) 57% of customers claim that social media influences their shopping, with Facebook leading these platforms at 44%.

Sources:

Please regulate us, Fast Times

Facebook facts and figures, 99Firms

Religions, World Data

Facebook acted late, Time Magazine

Facebook misinformation study, Avaaz

What Facebook gutting CrowdTangle means for misinformation, First Draft

Facebook Now Says It’s the Solution to the Crises It Created, The New Republic

What is the Facebook Oversight Board, CNN

Inside the Pro-Trump Facebook Group, ProPublica

Great article - everything that generates likes and shares on FB generates revenue for FB and FB will know exactly how much revenue is generated around the anti-vax traffic. They will never stop this themselves because as we know business knows no morals. What certain types of businesses say and what they do are two very different things. It will only ever be stopped by enforced regulation and fines that get their attention, but the trouble with that is that such a regime could only be put in place by those people who want to be popular. So, it goes on. And People die.

Here's another bit of Facebook trivia. Many of my Trump-supporting family and friends proudly announced on FB during the US Presidential election that they were closing their accounts and moving to Parler because of FB's perceived bias against Trump. Fast forward 9 months, they are all still on FB. They never left. They can't stop posting, reposting, liking or commenting. Addictive innit?